The Ambient Light Sensor is one of the more interesting data sources from a privacy point of view. I recently highlighted a number of potential privacy issues in my analysis.

I described how the ability to access and track the changes of lighting conditions in the user's environment might contribute to security and privacy issues such as:

- Information leaks

- Profiling user's behavior (behavioral analysis)

- Cross-device linking, tracking

- Tracking the distance the user has travelled

- Cross-device communication (out-of-band communication channel)

Additionally, I identified and filed Firefox and Chromium privacy issues relating to ambient light sensors; they are being addressed.

I also introduced my new project, SensorsPrivacy.

In this post I describe two specific security and privacy risks of Ambient Light Sensors. The first (active) exploits monitoring of user behavior and subtle behavioral changes; the second (passive) focuses on inferring environmental changes that are not caused by user interaction.

Stealing bank PINs via Light Sensors

A smartphone's Ambient Light Sensor readout is affected by a number of factors. A particularly important one is the user's behaviour. Even a tiny movement of the smartphone can affect the light level readout.

It turns out that one consequence of the side channel offered by the light sensor is the ability to steal credentials such as a bank PIN, as highlighted in Spreitzer's work. I will describe the outcome of that work.

How could a malicious application steal the user's banking PIN with a light sensor?

Light sensor data does not map unambiguously to a PIN's digits. It's not that a particular digit corresponds to a particular light level; the matter is more subtle. According to the report, the information leak stems from analysis of user behavior. The threat scenario envisions users running a specialized application that monitors their typing on the touchscreen. The application tries to trick users into revealing their use patterns (how they type) in an activity similar to PIN typing. While the user types, it records timestamped lighting conditions so that the rate of light level change can be analyzed later. Light level variations are typically related to subtle angle changes caused by slight differences in how the device is held. You know, when you type on a smartphone, it tends to move slightly.

Then, the application waits for a banking application to start (or tricks the user into starting one). Lighting conditions are still monitored. But at this point, the user's use patterns (which affect how quickly the sensor readout changes) are already known. The research studied the mechanics of deducing the PIN.
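To make the "rate of light level change" idea concrete, here is a minimal Python sketch that turns timestamped illuminance readings into rates of change. It is only an illustration under my own assumptions: the function name `light_change_rates` and the sample values are made up, and this is not the code used in the report.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (timestamp in seconds, illuminance in lux)


def light_change_rates(samples: List[Sample]) -> List[Tuple[float, float]]:
    """Return (timestamp, lux per second) for each consecutive pair of samples.

    Assumes the samples are sorted by time. Pairs with a zero or negative
    time step are skipped instead of dividing by zero.
    """
    rates = []
    for (t0, lux0), (t1, lux1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # duplicate or out-of-order timestamp
        rates.append((t1, (lux1 - lux0) / dt))
    return rates


# Made-up readings, purely for illustration (not data from the report).
samples = [(0.0, 100.0), (0.5, 110.0), (1.0, 105.0), (1.0, 105.0), (2.0, 125.0)]
rates = light_change_rates(samples)
print(rates)
```

An attacker would be interested in how these rates line up in time with touch events, not in the absolute light level.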

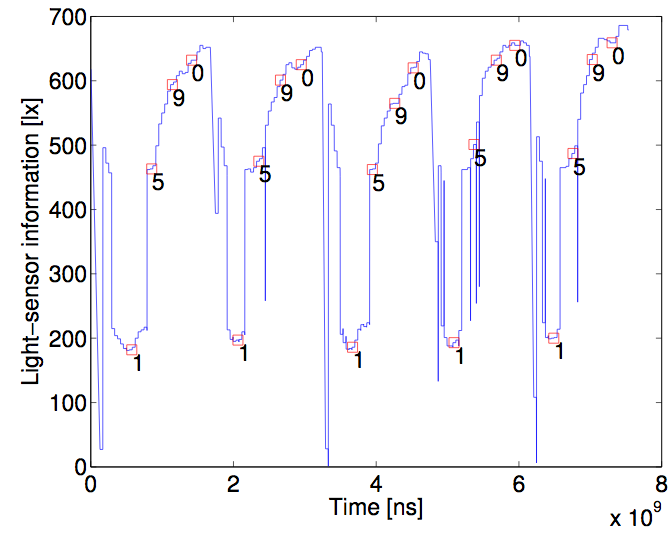

The image above (from the report) shows how particular PIN digits correlated with light level changes.

It is quite clear that in this particular case, the digits 0 and 9 were associated with higher light level readouts; they could be clearly distinguished from the others. A machine learning algorithm would have no problem classifying these events.
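As a rough illustration of why such events are easy to classify, the sketch below uses a nearest-centroid rule: it averages the light level changes observed for each digit during the "training" activity, then assigns a new observation to the closest average. The classifier choice, the function names and all numbers are my assumptions for illustration; the report's actual method and data may differ.

```python
from statistics import mean
from typing import Dict, List


def train_centroids(labelled: Dict[str, List[float]]) -> Dict[str, float]:
    """Average the observed light level change for each digit."""
    return {digit: mean(values) for digit, values in labelled.items()}


def classify(change: float, centroids: Dict[str, float]) -> str:
    """Return the digit whose average change is closest to the observation."""
    return min(centroids, key=lambda digit: abs(centroids[digit] - change))


# Hypothetical training observations (lux change while pressing each digit).
# These values are invented for the example and are NOT from the report.
training = {
    "0": [8.0, 9.0, 10.0],
    "5": [0.5, 1.0, 1.5],
    "9": [5.0, 6.0, 7.0],
}
centroids = train_centroids(training)
guess = classify(8.4, centroids)
print(guess)
```

With well-separated digits even this trivial rule works; digits whose readouts overlap would need richer features, such as the timing and rate of change.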

Light Sensor reveals individually-attributable information?

Another interesting observation from the paper is the ability to detect user traits. The paper reports that left-handed users apparently tilt the device to the left when typing PIN digits in the middle and right columns of the key pad, while right-handed users tended to tilt the smartphone to the right! This observation shows how it's possible to distinguish between left-handed and right-handed people via the light sensor.

While the particular threat model considered in the paper and the results could be a matter of debate, this is not the point of my note. I wanted to draw attention to the fact that light sensor readouts allow one to infer data about the user's behaviour.

I would say that a light sensor is able to detect subtle hand shaking, possibly revealing information about a user's condition or their risk of developing one, and possibly other aspects, too.

Detecting TV program via Light Sensors

Another relevant work I want to highlight is a report by Schwittmann and others. This work specifically mentions the W3C Ambient Light Sensor API that I analyzed in a previous note. The research describes a vector for detecting a video displayed on a TV.

How is it done? The researchers placed a smartphone on a table close to a TV to simulate normal conditions of users watching a video. Three scenarios were analyzed, with the smartphone facing the user, the TV, and the ceiling. The objective was to detect the video played on the TV. The smartphone tracked the rate of light level (illuminance) changes and sent the data to a server for later analysis; in this respect, the setting is similar to the approach of the report described previously. The smartphones read light conditions via the W3C Ambient Light Sensor API.

The researchers decomposed the video frames used in their experiments and looked for correlations with the illuminance reported by a smartphone. Different TV programs were used to validate the study. It turned out that illuminance provided clues about the watched TV programs; all of the TV programs were detected successfully. Professional YouTube productions were also detected with high accuracy (92%), although the detection rate for amateur productions turned out to be worse.
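The correlation step can be sketched as follows: given a per-frame brightness profile for each candidate video and the illuminance series measured by the phone, pick the candidate with the highest Pearson correlation. This is a simplified sketch under my own assumptions (equal-length, already aligned series; hypothetical names `pearson` and `best_match`; invented numbers), not the researchers' actual pipeline.

```python
from math import sqrt
from typing import Dict, List


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant series carries no information
    return cov / sqrt(var_x * var_y)


def best_match(illuminance: List[float], candidates: Dict[str, List[float]]) -> str:
    """Return the candidate whose brightness profile correlates best."""
    return max(candidates, key=lambda name: pearson(illuminance, candidates[name]))


# Invented brightness profiles (0..1) and lux readings, for illustration only.
candidates = {
    "video_a": [0.2, 0.8, 0.5, 0.9, 0.1],
    "video_b": [0.9, 0.1, 0.6, 0.2, 0.8],
}
measured = [12.0, 30.0, 21.0, 33.0, 9.0]
print(best_match(measured, candidates))
```

Correlation is insensitive to offset and scale, which is why a distant or reflected light source can still leak the brightness pattern of what is on screen.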

Note that keeping the smartphone far from the TV is not necessarily a protection measure, as light can be reflected from walls, and chances are that your smartphone is equipped with a light sensor able to detect light even from a distance of a few meters (you can check this out on the SensorsPrivacy project!). The exposure time also matters: the longer the smartphone reads and provides illuminance data, the better the recognition.

So perhaps it's time to put your smartphone with its touch screen facing the table the next time you watch a movie? Another option could be to make your room very bright (i.e. 30k-100k lux), but it's not clear if you'd like to sit in such conditions all the time!

I don't think so. And more seriously, let's just analyze, build and design technologies with privacy in mind. We have only recently started paying attention to those aspects.

Summary

Web developers will need to consider possible passive and active side channels, including those arising from the use of sensors.

However, another interesting aspect to consider is this: if the data provided by some sensors are sensitive and convey so much information, aren't they covered by the EU's General Data Protection Regulation? In that case, web privacy risk and impact assessments might become a standard development practice applied to web, mobile, Internet of Things and Web of Things applications. A privacy engineering process is the way to go.

These are simply a different type of issue than the matter of introducing permissions to access Ambient Light Sensors.