In 2017 I assessed the privacy of ambient light sensors. Follow-up research demonstrated that the light sensor could be used to steal users' private data. This risk challenged the cornerstone of web security and privacy, the same-origin policy. My work led to broader conclusions about designing technology with privacy in mind.

Now research published in Science vindicates my work. The paper further explores the privacy footprint of light sensors. The researchers demonstrate capturing images of the environment in front of the screen using the sensor alone. They show how the ambient light sensor allows the reconstruction of images of the surroundings, including user faces (albeit at low resolution, or highly blurred). Why is this a problem? Because it does not use the smartphone or notebook camera, the obvious component for picturing the surroundings.

Privacy constraints

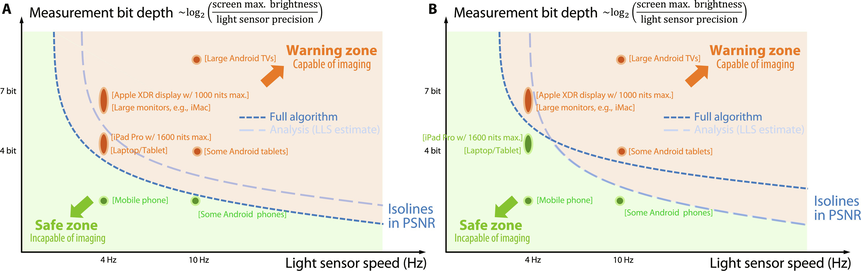

The crucial value of this work is that it establishes clear constraints on parameters like sampling frequency and illuminance precision: which values are safe, and where misuse becomes possible. The research indicated that low-resolution, low-frequency acquisition (10-20 Hz sensors…) required minutes to reconstruct a 32x32 pixel image. That is too long for practical significance, but tailored attacks still cannot be ruled out. The assessment puts constraints on information gathering. If the ambient light sensor output is quantized to 4 bits (0-15 lux), the reconstructed information becomes impossible to decipher. Still, some devices like Smart TVs are in the unsafe zone.

I appreciate the safety riddle because exactly the same problem arose when selecting the privacy thresholds for the W3C Ambient Light Sensor, work done in the W3C Devices and Sensors Working Group, and similar tests were performed.

Privacy by Design and GDPR State-of-the-art

Such considerations are useful for future technology (hardware, software) development. They define the threshold that must not be surpassed, or else misuse becomes too risky. This work therefore serves as a crucial reference for any privacy impact assessment, or state-of-the-art assessment, under the GDPR Data Protection by Design principle. Designers of smartphones, smartwatches, and other products of this kind must take this and the prior works into account. This is how the “state-of-the-art” clause of modern regulations like GDPR functions. Designers and privacy analysts must not ignore this level of existing knowledge or insight. If they do ignore it, data protection authorities have justified reasons to raise objections, or even to issue GDPR fines or bans on processing, in response to clear ignorance of the state of the art. Data protection aspects of technology design are a regulated territory.

Meaningful explanations of what the GDPR "state-of-the-art" clause means in practice aren't that easy to find, so you'll likely find this useful as an interpretative case study.

The impact of the privacy analysis of the ambient light sensor

Partly following my work, ambient light sensor access is now gated behind a browser permission and is also subject to the Permissions Policy mechanism: the feature can be disabled on sensitive sites.
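To make the two gates concrete, here is a minimal sketch in TypeScript. The `ambient-light-sensor` token is the feature and permission name used for this sensor; the helper names `ambientLightPolicyHeader`, `canReadAmbientLight` and the `PermissionsLike` interface are my own, introduced only for illustration. Treat it as a sketch under those assumptions, not as the way any particular site or browser does it.

```typescript
// Hypothetical helper: builds the response header a site could send.
// An empty allowlist "()" disables the sensor for the page and every
// embedded frame; "(self)" keeps it available to the page's own origin only.
function ambientLightPolicyHeader(allowSelf: boolean): [string, string] {
  const allowlist = allowSelf ? "(self)" : "()";
  return ["Permissions-Policy", `ambient-light-sensor=${allowlist}`];
}

// Minimal shape of the Permissions API that this sketch relies on.
// In a browser you would pass navigator.permissions.
interface PermissionsLike {
  query(descriptor: { name: string }): Promise<{ state: string }>;
}

// Hypothetical helper: true only when the permission is already granted.
// Browsers that do not recognise the permission name reject the query,
// which is treated here as "no access".
async function canReadAmbientLight(permissions: PermissionsLike): Promise<boolean> {
  try {
    const status = await permissions.query({ name: "ambient-light-sensor" });
    return status.state === "granted";
  } catch {
    return false;
  }
}
```

A sensitive site (say, online banking) would send the header with the empty allowlist, so that neither its own scripts nor any third-party frame can read the sensor, regardless of what the user has granted elsewhere.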

Privacy recommendations

The said paper restates what we already know, and what is already implemented or specified (without acknowledging the prior works, though my paper is cited):

“From the software side, the imaging threats that we have revealed suggest two mitigation strategies: tighten permission controls and reduce the information output by the sensor. Restricting access to the screen is probably not realistic, but the lack of permission to access the ambient light sensor may need to be rethought. Second, the precision and speed of the ambient light sensor should be reduced in its application programming interface … Accordingly, we propose quantizing the sensor output more (e.g., at a step of 10 lux), reducing speed (e.g., 1 to 5 Hz)”.

It so happens that with the W3C Ambient Light Sensor we did that already, a few years ago:

“In order to reduce accuracy of sensor readings, this specification defines a threshold check algorithm (the ambient light threshold check algorithm) and a reading quantization algorithm (the ambient light reading quantization algorithm). … Choosing an illuminance rounding multiple requires balancing not exposing readouts that are too precise … The illuminance threshold value is used to prevent leaking the fact that readings are hovering around a particular value but getting quantized to different values.

These algorithms make use of the illuminance rounding multiple and the illuminance threshold value”
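The two algorithms in the quoted passage can be sketched roughly as follows. This is my simplified reading, not the specification text: the function names are hypothetical, and the 50 lux rounding multiple and 25 lux threshold are illustrative values assumed for this example (check the specification for the values it actually recommends).

```typescript
// Illustrative values, assumed for this sketch only.
const ILLUMINANCE_ROUNDING_MULTIPLE = 50; // lux
const ILLUMINANCE_THRESHOLD = 25; // lux

// Reading quantization: round to the nearest multiple of the rounding multiple.
function quantizeIlluminance(
  lux: number,
  multiple: number = ILLUMINANCE_ROUNDING_MULTIPLE
): number {
  return Math.round(lux / multiple) * multiple;
}

// Threshold check: a new raw reading is only worth exposing if it moved far
// enough from the last accepted raw reading AND lands in a different bucket.
function passesThresholdCheck(
  newLux: number,
  latestLux: number | null,
  threshold: number = ILLUMINANCE_THRESHOLD
): boolean {
  if (latestLux === null) return true; // nothing exposed yet
  if (Math.abs(newLux - latestLux) < threshold) return false;
  return quantizeIlluminance(newLux) !== quantizeIlluminance(latestLux);
}

// Feed raw sensor values through both steps; returns what a page would see.
function exposeReadings(raw: number[]): number[] {
  const exposed: number[] = [];
  let latestRaw: number | null = null;
  for (const lux of raw) {
    if (passesThresholdCheck(lux, latestRaw)) {
      latestRaw = lux;
      exposed.push(quantizeIlluminance(lux));
    }
  }
  return exposed;
}

// Raw values 24 and 26 straddle a rounding boundary (0 vs 50 lux), but they
// are within the threshold of the accepted reading 12, so neither is exposed.
console.log(exposeReadings([12, 24, 26, 49, 130, 140])); // [0, 50, 150]
```

The threshold step is what stops the "hovering" leak: with quantization alone, a value oscillating between 24 and 26 lux would flip the output between 0 and 50, revealing that the true reading sits right at the boundary.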

Last words

The paper confirms and vindicates my work and the recommendations made during the privacy analysis of the Ambient Light Sensor. This stuff is now in your web browser.

As a side comment, this is another scientific work confirming my prior works in this domain. Nice feeling.